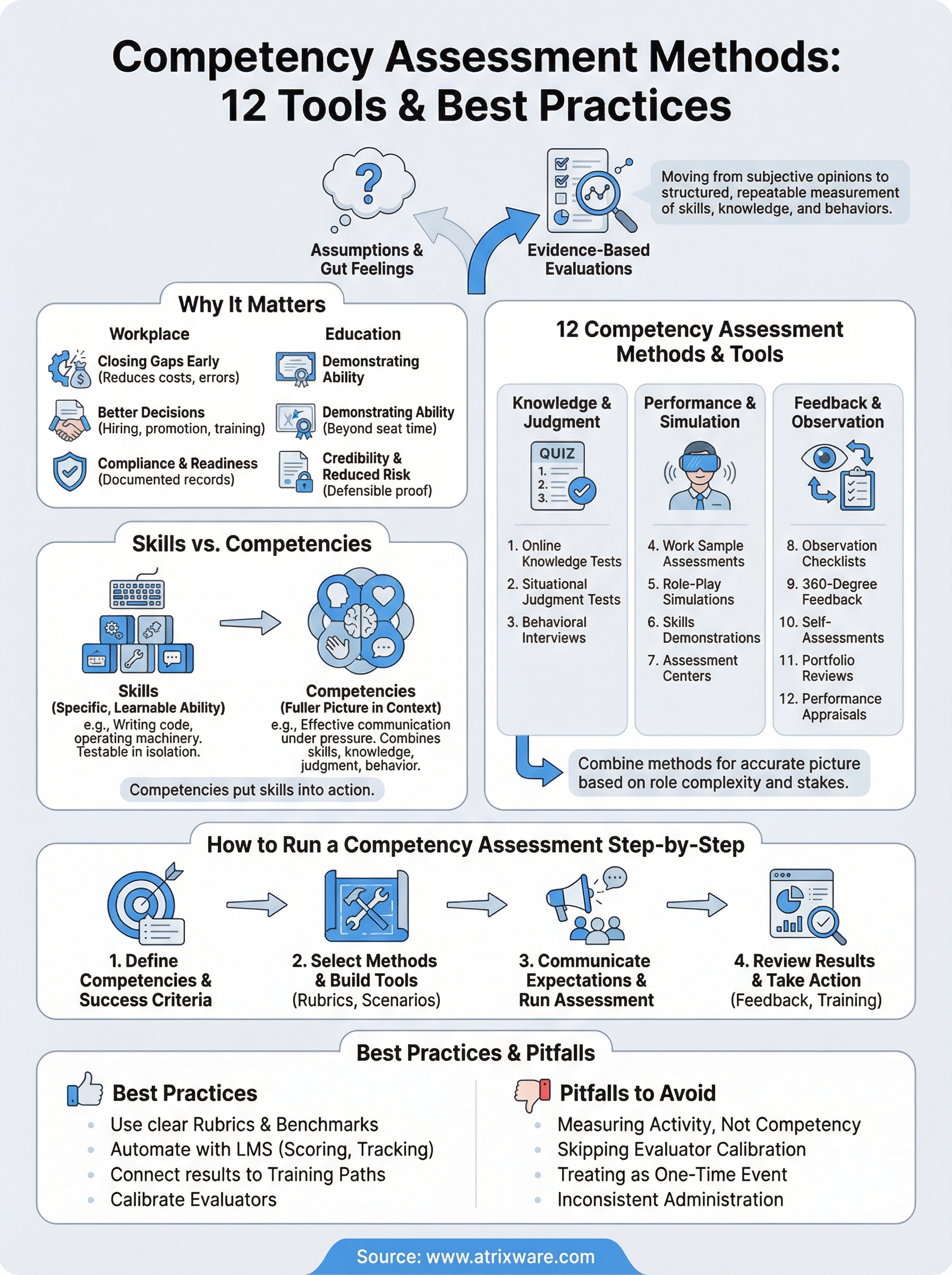

Hiring the right person, promoting the right manager, certifying that an employee actually knows what they’re doing: all of these decisions hinge on how well you measure competency. Yet many organizations still rely on gut feelings or outdated annual reviews to make these calls. Competency assessment methods give you a structured, repeatable way to evaluate whether someone has the skills, knowledge, and behaviors a role demands. When done right, they reduce bias, surface skill gaps early, and connect training directly to business outcomes.

The challenge isn’t a shortage of options. It’s knowing which methods fit your goals, your team size, and your workflow. A 50-person company rolling out its first compliance training program has different needs than a global enterprise tracking certifications across dozens of departments. The method you choose shapes everything from how learners experience the evaluation to how accurately you can report on organizational readiness. That’s also where your technology stack matters: an LMS like Axis LMS from Atrixware can automate scoring, track competency progress over time, and tie assessment results back to specific training paths, turning raw data into action.

This guide breaks down 12 proven competency assessment tools and best practices, covering what each method looks like in practice, when to use it, and how to get the most from it. Whether you’re building a program from scratch or tightening one that already exists, you’ll walk away with a clear picture of which approaches match your training objectives, and how to implement them.

Why competency assessments matter at work and school

Organizations and institutions alike face the same core problem: someone performs a role or completes a course, but no one has a reliable way to confirm they can actually do what the role or credential demands. Competency assessments fix that by replacing assumptions with evidence-based evaluations. Whether you’re managing a sales team, onboarding new hires, or running a certification program, structured assessments give you a consistent baseline so every decision you make about people has real data behind it, not just a supervisor’s impression.

In the workplace: closing gaps before they cost you

Skill gaps are expensive. When employees lack the competencies their roles require, productivity drops, errors increase, and customers feel the impact before leadership does. Competency assessment methods give you a way to catch those gaps early, before they turn into turnover problems or compliance failures. Instead of waiting for an annual performance review, you can run targeted assessments after onboarding, after training, or at any point in the employee lifecycle where a role’s demands shift.

If you can’t measure a competency, you can’t manage it, and you certainly can’t improve it at scale.

Structured assessments also create a common language for performance conversations. When every manager uses the same rubric to evaluate the same skill, you eliminate the subjectivity that makes promotion decisions inconsistent and development conversations vague. Your HR and L&D teams can then use that standardized data to prioritize training investments where they’ll have the most impact, rather than guessing based on anecdotal feedback from a handful of team leads.

Beyond individual performance, workplace assessments drive organizational readiness. If your company needs to meet regulatory standards, for example FDA 21 CFR Part 11 or GDPR compliance requirements, documented competency records become your evidence that employees were trained and evaluated appropriately. Auditors don’t accept verbal assurances; they look for verifiable data tied to specific roles, specific learners, and specific dates. A well-designed assessment program gives you exactly that.

Assessments also directly support workforce planning. When you know which competencies exist across your teams and which ones are missing, you can make informed decisions about hiring, internal mobility, and succession planning. That kind of visibility turns training from a checkbox activity into a strategic lever your organization can actually pull.

In education and formal training: certifying what learners can actually do

Competency-based education shifts the measure of success from time spent in a course to what a learner can demonstrate afterward. In traditional settings, a passing score on a multiple-choice test often signals completion rather than genuine understanding. Structured assessments change that by requiring learners to apply knowledge in realistic contexts, demonstrate a skill under observed conditions, or show how they’d respond to a scenario that reflects an actual job situation.

For training programs tied to professional certifications or continuing education units (CEUs), this distinction matters even more. Issuing a certificate to someone who hasn’t demonstrated the underlying competency isn’t just a credibility problem; it creates liability risk for the organization issuing it. Assessments that map directly to defined standards give learners clear targets and give your organization defensible proof that the certification represents real capability, not just participation.

Competency-based approaches also benefit learners who bring prior experience. When assessment results drive progression rather than seat time, experienced employees can demonstrate mastery quickly and move on to content that actually fills a gap. That respects their time, keeps engagement higher, and makes your training program more efficient overall.

Skills vs competencies and what you should measure

People use "skills" and "competencies" interchangeably, but they describe different things, and that distinction changes what you measure and how you measure it. A skill is a specific, learnable ability you can usually observe and test in isolation: writing SQL queries, operating a forklift, or delivering a customer refund within policy guidelines. Skills are the building blocks. Competencies are the fuller picture, combining skills with knowledge, judgment, and behavior to describe how someone performs in real job conditions.

What a skill captures

A skill is narrow by design. When you assess a skill, you’re asking a targeted question: can this person do this specific thing at an acceptable level? That makes skills straightforward to measure. A typing test, a safety certification exam, or a product knowledge quiz all evaluate discrete skills. The score tells you whether the person clears the threshold.

Skills-based measurement works well when a task is routine, standardized, and observable. If you’re onboarding a warehouse team and need to confirm everyone knows how to operate the inventory system, testing a specific skill gets you that answer quickly and cleanly.

What competencies add to the picture

Competencies take a skill and put it in context. Consider communication: the skill might be "writes clearly." The competency is "adjusts communication style to the audience, conveys complex information without jargon, and responds constructively to pushback." That’s not a single skill; it’s a cluster of behaviors tied to a performance standard for a specific role.

Measuring competencies gives you a more accurate picture of job performance than measuring skills alone, because roles demand judgment, not just ability.

Competency-based measurement is more complex to design, but it’s what most organizations actually need when they’re trying to predict job success, support promotions, or identify who’s ready for more responsibility.

Deciding what to measure in your program

Start by asking what the role actually demands. For frontline positions with clear, repeatable tasks, skills assessments cover most of what you need. For roles that involve decision-making, cross-functional work, or leadership, you need competency assessment methods that capture the full behavioral picture.

A practical approach is to map both layers: identify the core skills each role requires, then define the competencies that show how those skills come together under real working conditions. That structure gives you sharper training targets and more meaningful data when you score results and plan your next round of development.

The 12 competency assessment methods and tools

No single assessment covers everything a role demands. The most effective programs use a mix of competency assessment methods drawn from three broad categories: knowledge and judgment tests, performance-based evaluations, and feedback-driven approaches. Each category captures a different dimension of competency, and combining methods gives you a far more accurate picture than relying on any one tool alone.

| # | Method | Primary Use |
|---|---|---|
| 1 | Online knowledge tests | Verify factual recall and procedural understanding |
| 2 | Situational judgment tests | Assess decision-making in realistic scenarios |
| 3 | Behavioral interviews | Surface past performance as a predictor of future behavior |
| 4 | Work sample assessments | Evaluate output quality on real or simulated tasks |
| 5 | Role-play simulations | Test interpersonal and situational skills live |
| 6 | Skills demonstrations | Confirm hands-on technical or procedural ability |
| 7 | Assessment centers | Run multi-method evaluations for complex or senior roles |
| 8 | Observation checklists | Document behavior during actual job performance |
| 9 | 360-degree feedback | Gather multi-rater input on behavioral competencies |
| 10 | Self-assessments | Capture the learner’s own perception of their competency level |
| 11 | Portfolio reviews | Evaluate a curated collection of real work evidence |
| 12 | Performance appraisals | Measure competency outcomes through structured manager reviews |

Knowledge and judgment methods

Online knowledge tests and situational judgment tests are your fastest options for assessing large groups at once. Knowledge tests confirm that learners retained key information from training. Situational judgment tests go further by presenting a realistic scenario and asking what the learner would do, which surfaces decision-making quality rather than just recall.

Behavioral interviews apply the same logic through conversation. Asking employees or candidates to describe past situations reveals how they’ve actually applied a competency under pressure. Past behavior is often a stronger predictor of future performance than answers to hypothetical questions.

Performance and simulation methods

These methods require learners to show, not just tell. Work sample assessments ask someone to complete an actual task, so you evaluate real output rather than self-reported ability. Role-play simulations and skills demonstrations introduce a controlled environment where you can observe behavior consistently across every candidate or employee.

When you need high-confidence assessments for senior roles or regulatory requirements, performance-based methods produce the most defensible evidence.

Assessment centers combine multiple approaches into one structured program. They take more time and resources to run, but they’re the most comprehensive option available for leadership evaluations or high-stakes credentialing decisions.

Feedback and observation methods

Observation checklists and 360-degree feedback both rely on watching behavior over time rather than capturing a single moment. Managers use observation checklists during actual job performance to confirm whether specific behaviors meet a defined standard. Self-assessments and portfolio reviews shift the lens to the learner, giving them an active role in documenting their own competency development.

Performance appraisals pull all of this together at a defined interval. They create a formal, time-stamped record that supports promotion decisions, development planning, and compliance documentation when auditors ask for proof that training translated into demonstrated capability.

How to choose the right method mix for each role

Picking a single assessment tool and applying it across every role in your organization is one of the most common mistakes in competency program design. Different roles carry different risk profiles, different task structures, and different behavioral demands, so the right combination of competency assessment methods shifts depending on what you’re actually trying to measure and how much is at stake if you get it wrong.

Match complexity to stakes

Start by mapping each role against two variables: how complex the work is and what happens when someone performs below the required standard. A frontline customer service rep making a mistake in a refund process carries far less risk than a compliance officer missing a regulatory requirement. For lower-complexity, lower-stakes roles, a well-designed knowledge test paired with an observation checklist during the first 90 days gives you a solid and efficient baseline.
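To make this two-variable mapping repeatable, you can encode it as a small lookup. The Python sketch below is a minimal illustration. It assumes a simple low/high rating on each axis, and the method lists are illustrative rather than a prescribed standard. The extra situational judgment test for complex but lower-stakes roles is an added assumption; it does not come from the guidance above.

```python
from enum import Enum


class Rating(Enum):
    LOW = "low"
    HIGH = "high"


# Baseline for lower-complexity, lower-stakes roles (per the guidance above).
BASELINE = ["online knowledge test", "observation checklist (first 90 days)"]

# Methods layered in when the cost of a competency gap is high; each one
# approaches the same capability from a different angle. Illustrative list.
HIGH_STAKES_LAYER = ["behavioral interview", "work sample assessment", "role-play simulation"]


def recommend_methods(complexity: Rating, stakes: Rating) -> list[str]:
    """Return a suggested method mix for a role (hypothetical helper)."""
    methods = list(BASELINE)  # copy so the baseline list is never mutated
    if stakes is Rating.HIGH:
        methods += HIGH_STAKES_LAYER
    elif complexity is Rating.HIGH:
        # Assumption: complex but lower-stakes work adds one judgment-focused method.
        methods.append("situational judgment test")
    return methods


print(recommend_methods(Rating.LOW, Rating.LOW))
# ['online knowledge test', 'observation checklist (first 90 days)']
```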

When the cost of a competency gap is high, you need multiple methods that each approach the same capability from a different angle.

For high-stakes roles, layer in behavioral interviews, work sample assessments, or structured simulations so you’re capturing performance evidence from more than one vantage point. The overlap between methods surfaces inconsistencies that a single tool would miss entirely.

Factor in your resources and timeline

Your assessment design also has to work within real constraints. Running a full assessment center takes time, trained evaluators, and scheduling coordination. That is manageable for senior leadership hiring but impractical for onboarding 200 seasonal employees in three weeks. Be honest about what your team can execute consistently, and map your method choices to that capacity.

For large cohorts moving through training on a rolling basis, online knowledge tests and situational judgment tests give you scalable, scorable data without heavy facilitation overhead. Reserve the resource-intensive methods like portfolio reviews or 360-degree feedback for roles where that depth of evidence genuinely changes a decision, such as promotions, certification renewals, or high-visibility development programs.

Build a role-specific method matrix

A practical way to lock this in across your organization is to build a simple matrix that lists each role category, the core competencies that role demands, and the assessment methods assigned to each competency. This prevents ad hoc decisions every time a new cohort comes through and gives your managers and L&D team a repeatable reference.

Your matrix doesn’t need to be elaborate. Three columns covering role type, target competency, and selected assessment method are enough to create consistency, reduce evaluator bias, and give you comparable data across teams over time.
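If you keep the matrix in code or export it from a spreadsheet, the three columns map naturally onto a simple record type. The Python sketch below is a minimal example. The role names, competencies and method assignments are hypothetical placeholders for your own.

```python
from collections import defaultdict
from typing import NamedTuple


class MatrixRow(NamedTuple):
    role_type: str
    competency: str
    method: str


# Illustrative rows only; replace with your own role categories and competencies.
MATRIX = [
    MatrixRow("Customer service rep", "Refund policy knowledge", "Online knowledge test"),
    MatrixRow("Customer service rep", "De-escalation", "Role-play simulation"),
    MatrixRow("Compliance officer", "Regulatory interpretation", "Work sample assessment"),
    MatrixRow("Compliance officer", "Ethical judgment", "Situational judgment test"),
]


def methods_by_role(rows):
    """Group matrix rows into {role: {competency: method}} for quick lookup."""
    lookup = defaultdict(dict)
    for row in rows:
        lookup[row.role_type][row.competency] = row.method
    return {role: dict(competencies) for role, competencies in lookup.items()}


plan = methods_by_role(MATRIX)
print(plan["Compliance officer"]["Ethical judgment"])  # Situational judgment test
```

Keeping one row per competency lets evaluators look up the assigned method directly. It also makes it easy to see when a competency has no method attached.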

How to run a competency assessment step by step

Running an assessment without a clear process almost always produces data you can’t use. Inconsistent administration, unclear criteria, and missing follow-through turn what should be a useful evaluation into a box-checking exercise. Treating competency assessment methods as a structured process with defined stages keeps every assessment comparable, defensible, and tied to a real outcome you can act on.

Step 1: Define the competencies and success criteria

Before you build a single test question or schedule a single observation session, you need to pin down exactly what you’re measuring and what "good" looks like. Pull from the role’s job requirements, your organization’s competency framework, or regulatory standards that apply to the position. For each competency you’re assessing, write a clear behavioral definition and two or three observable indicators that confirm someone meets the standard. Without this foundation, your evaluators will each score differently, and your results won’t hold up under scrutiny.
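One way to enforce this discipline is to refuse any competency definition that lacks observable indicators. The Python sketch below does that with a dataclass that rejects fewer than two indicators. The field names and the example competency are illustrative assumptions, not a required schema.

```python
from dataclasses import dataclass


@dataclass
class Competency:
    name: str
    definition: str        # behavioral definition of what "good" looks like
    indicators: list[str]  # observable evidence that the standard is met

    def __post_init__(self):
        # Guard against vague definitions: require at least two indicators.
        if len(self.indicators) < 2:
            raise ValueError(f"{self.name!r} needs at least two observable indicators")


communication = Competency(
    name="Audience-appropriate communication",
    definition="Adjusts communication style to the audience and conveys complex information without jargon.",
    indicators=[
        "Explains a technical issue to a non-specialist without undefined jargon",
        "Responds constructively when a stakeholder pushes back",
    ],
)
```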

Step 2: Select your methods and build your tools

Match your method choices to the competencies you defined in step one. Knowledge-based competencies call for tests or situational judgment questions. Behavioral and interpersonal competencies need observation checklists, simulations, or structured interviews. Once you’ve selected your methods, build the actual instruments: write questions, design rubrics, create observation forms, or script the scenario. Keep each tool tightly aligned to the behavioral indicators from step one so scoring stays consistent across every evaluator and every cohort.

The tighter the link between your assessment tool and your defined success criteria, the more reliable and actionable your results will be.

Step 3: Communicate expectations and run the assessment

Learners perform better and more authentically when they understand what the assessment covers and what standard they’re being held to. Send clear instructions ahead of time that explain the format, the timeline, and the evaluation criteria. Doing this also reduces the kind of anxiety that skews results in ways that have nothing to do with actual competency. During the assessment itself, keep conditions consistent across all participants: same instructions, same time limits, and the same guidance given to every evaluator so your data is genuinely comparable.

Step 4: Review results and take action

Collecting scores is not the finish line. Analyze results against your benchmarks, identify who falls below the defined threshold, and map those gaps directly to specific training interventions. Share individual results with employees promptly so the feedback is still connected to the experience. Document everything, including scores, dates, evaluator names, and planned follow-up actions, so you maintain a clean record for compliance audits, promotion decisions, or future assessment cycles.
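The review step can be expressed as a simple loop. It compares each score to its benchmark, attaches a follow-up intervention to anyone below threshold, and keeps the date and evaluator with the record. The Python sketch below illustrates this with hypothetical benchmarks, interventions and learner results, all scored as percentages.

```python
from datetime import date

# Hypothetical benchmarks (percent) and remedial interventions per competency.
BENCHMARKS = {"Refund policy": 85, "De-escalation": 75}
INTERVENTIONS = {
    "Refund policy": "Refund policy refresher module",
    "De-escalation": "De-escalation coaching session",
}

# Illustrative results from one assessment cycle.
results = [
    {"learner": "learner-01", "competency": "Refund policy", "score": 92, "evaluator": "evaluator-A"},
    {"learner": "learner-02", "competency": "Refund policy", "score": 78, "evaluator": "evaluator-A"},
    {"learner": "learner-02", "competency": "De-escalation", "score": 80, "evaluator": "evaluator-B"},
]


def review(results, benchmarks, interventions, assessed_on):
    """Compare each score to its benchmark and attach a documented follow-up."""
    records = []
    for r in results:
        threshold = benchmarks[r["competency"]]
        meets = r["score"] >= threshold
        records.append({
            **r,                      # keep learner, competency, score, evaluator
            "date": assessed_on,
            "threshold": threshold,
            "meets_standard": meets,
            "follow_up": None if meets else interventions[r["competency"]],
        })
    return records


for rec in review(results, BENCHMARKS, INTERVENTIONS, date.today()):
    if not rec["meets_standard"]:
        print(f'{rec["learner"]}: {rec["competency"]} {rec["score"]} < {rec["threshold"]} -> {rec["follow_up"]}')
# learner-02: Refund policy 78 < 85 -> Refund policy refresher module
```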

How to score results with rubrics and benchmarks

Raw scores without context tell you almost nothing. A 78% on a knowledge test looks like a pass until you realize the passing threshold for that role is 85% because regulatory requirements demand a higher standard. Scoring competency assessments well means building two things in advance: a rubric that defines what each performance level looks like, and a benchmark that tells you where the line between acceptable and not acceptable sits for each specific role.

Build a rubric that ties scores to observable behavior

Your rubric is the bridge between what an evaluator sees and what the score reflects. Each level in your rubric needs a behavioral description, not just a number, so two different evaluators watching the same performance reach the same conclusion. A four-point scale works well for most assessments: below standard, approaching standard, meets standard, and exceeds standard. For each level, write two or three specific observable indicators so your evaluators aren’t guessing.

Rubrics eliminate the gap between what you intended to measure and what your evaluators actually score.

Keep your rubric tied directly to the success criteria you defined before building the assessment. If your rubric levels reference vague terms like "good communication" without specifying what that looks like in context, evaluator drift will undermine your data over time, especially as new managers or facilitators join the program.

| Performance Level | Description |
|---|---|
| Below Standard | Does not demonstrate the required behavior in observed conditions |
| Approaching Standard | Shows partial demonstration; requires coaching or additional practice |
| Meets Standard | Demonstrates the behavior consistently and at the required level |
| Exceeds Standard | Demonstrates the behavior at a higher level than the role currently demands |
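If your scoring runs through a script or system, you can represent the four-point scale as an ordered enumeration so levels compare naturally. The Python sketch below does this. It assumes that anything below "Meets Standard" triggers follow-up, which is consistent with the table's "requires coaching or additional practice" wording but is a choice you may want to adjust.

```python
from enum import IntEnum


class Level(IntEnum):
    BELOW_STANDARD = 1
    APPROACHING_STANDARD = 2
    MEETS_STANDARD = 3
    EXCEEDS_STANDARD = 4


# Descriptions from the rubric table; a real rubric would add two or three
# role-specific observable indicators under each level.
DESCRIPTIONS = {
    Level.BELOW_STANDARD: "Does not demonstrate the required behavior in observed conditions",
    Level.APPROACHING_STANDARD: "Shows partial demonstration; requires coaching or additional practice",
    Level.MEETS_STANDARD: "Demonstrates the behavior consistently and at the required level",
    Level.EXCEEDS_STANDARD: "Demonstrates the behavior at a higher level than the role currently demands",
}


def needs_follow_up(level: Level) -> bool:
    """Assumption: any level below 'Meets Standard' triggers coaching or reassessment."""
    return level < Level.MEETS_STANDARD
```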

Set benchmarks that reflect real role demands

Benchmarks answer the question every assessor eventually asks: what score is good enough? Start by working with subject matter experts and managers to define the minimum acceptable performance for each competency in each role. Your benchmark shouldn’t be arbitrary; it should reflect the actual consequence of performing below that level, whether that’s a compliance risk, a quality problem, or a customer impact.

Different roles warrant different benchmarks even when the competency label looks the same. A "problem-solving" benchmark for a junior analyst will sit at a lower threshold than the same competency assessed for a senior operations manager. Build separate benchmark tables for each role category and store them alongside your rubrics so every evaluator is working from the same standard and your results stay comparable across cohorts and assessment cycles.
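A role-keyed benchmark table makes the earlier 78% example concrete: the same score can clear one role's threshold and fall short of another's. In the Python sketch below, the 70% and 85% thresholds are illustrative placeholders, not recommended values. Set real thresholds with your subject matter experts and managers.

```python
# Illustrative thresholds (percent), keyed by (role, competency).
BENCHMARKS = {
    ("junior analyst", "problem-solving"): 70,
    ("senior operations manager", "problem-solving"): 85,
}


def meets_benchmark(role: str, competency: str, score: float) -> bool:
    """Check a score against the role-specific benchmark for a competency."""
    threshold = BENCHMARKS.get((role, competency))
    if threshold is None:
        # Fail loudly rather than silently applying some default standard.
        raise ValueError(f"No benchmark defined for {competency!r} in role {role!r}")
    return score >= threshold


print(meets_benchmark("junior analyst", "problem-solving", 78))             # True
print(meets_benchmark("senior operations manager", "problem-solving", 78))  # False
```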

Common pitfalls and how to avoid them

Even well-intentioned assessment programs fail when the design skips critical steps or the execution drifts from the original plan. Knowing where these programs break down helps you build yours in a way that holds up under real conditions, not just on paper. The pitfalls below appear repeatedly across organizations of all sizes, and each one is avoidable when you address it before the program launches rather than after results start coming in.

Measuring activity instead of competency

One of the most common mistakes is designing competency assessment methods around completion rather than demonstrated ability. Tracking whether someone finished a course or attended a session tells you nothing about what they can actually do when the situation demands it. Your assessments need to require learners to produce evidence of competency, not just confirm they showed up.

Fix this by reviewing each assessment instrument before rollout and asking a single question: does this tool require the learner to apply the competency or just recall information about it? If the answer is recall only, redesign the tool to include a performance task, scenario, or behavioral prompt that surfaces real capability.

Skipping calibration across evaluators

When multiple managers or facilitators score the same assessments without calibration, your results reflect who did the scoring as much as they reflect learner performance. One evaluator grades generously, another applies a stricter standard, and your data becomes impossible to compare across teams or cohorts.

Calibration is not optional when you need results that hold up to scrutiny during a promotion cycle or compliance audit.

Before each assessment cycle, bring your evaluators together to score the same sample responses using your rubric. Discuss any gaps in how they applied the scoring criteria and align on shared interpretations. This single step reduces evaluator drift significantly and gives your results the consistency they need to drive real decisions.
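A calibration session is easier to run when you can see at a glance which sample responses produced disagreement and which evaluators lean lenient or strict. The Python sketch below does both for a hypothetical round in which three evaluators score the same three samples on the four-point rubric (1 = Below Standard, 4 = Exceeds Standard). The data and the zero-spread tolerance are assumptions for illustration.

```python
# Hypothetical calibration round: every evaluator scores the same samples.
calibration_scores = {
    "sample-1": {"evaluator-A": 3, "evaluator-B": 3, "evaluator-C": 3},
    "sample-2": {"evaluator-A": 4, "evaluator-B": 2, "evaluator-C": 3},
    "sample-3": {"evaluator-A": 3, "evaluator-B": 2, "evaluator-C": 2},
}


def samples_to_discuss(scores, max_spread=0):
    """Return (sample, spread) pairs where ratings differ by more than max_spread levels."""
    flagged = []
    for sample, ratings in scores.items():
        spread = max(ratings.values()) - min(ratings.values())
        if spread > max_spread:
            flagged.append((sample, spread))
    return flagged


def evaluator_means(scores):
    """Average level per evaluator; a consistently higher mean suggests lenient grading."""
    by_evaluator = {}
    for ratings in scores.values():
        for evaluator, level in ratings.items():
            by_evaluator.setdefault(evaluator, []).append(level)
    return {e: round(sum(v) / len(v), 2) for e, v in by_evaluator.items()}


print(samples_to_discuss(calibration_scores))  # [('sample-2', 2), ('sample-3', 1)]
print(evaluator_means(calibration_scores))     # {'evaluator-A': 3.33, 'evaluator-B': 2.33, 'evaluator-C': 2.67}
```

The flagged samples become the agenda for the discussion, and the per-evaluator averages show who to check in with first.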

Treating assessment as a one-time event

A single assessment at the end of a training program captures a moment, not a trajectory. Competencies develop over time, and a learner who scores below standard in month one may be fully capable by month three if they receive targeted support. Relying on one data point leaves you without the information you need to course-correct.

Build structured reassessment checkpoints into your program calendar so you can track progress, confirm that training interventions actually worked, and update competency records as people grow into their roles. This also gives you the longitudinal data that makes workforce planning and succession decisions far more defensible than a single snapshot ever could.
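Once reassessment checkpoints exist, a short summary per learner turns the individual scores into a trajectory. The Python sketch below shows one way to produce it. It assumes monthly checkpoints scored as percentages against a single threshold; the history values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Checkpoint:
    month: int  # months since the learner started the program
    score: int  # percent score at this reassessment


def trajectory(history, threshold):
    """Summarize a learner's progress across reassessment checkpoints."""
    ordered = sorted(history, key=lambda c: c.month)
    first, latest = ordered[0], ordered[-1]
    return {
        "change": latest.score - first.score,
        "currently_meets": latest.score >= threshold,
        # First checkpoint at which the benchmark was met, or None if never.
        "first_met_month": next((c.month for c in ordered if c.score >= threshold), None),
    }


history = [Checkpoint(1, 62), Checkpoint(2, 74), Checkpoint(3, 88)]
print(trajectory(history, threshold=85))
# {'change': 26, 'currently_meets': True, 'first_met_month': 3}
```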

How software and an LMS support competency assessments

Running competency assessment methods manually works at a small scale, but it breaks down quickly when you’re managing dozens of roles, hundreds of learners, and ongoing reassessment cycles. Purpose-built software and a learning management system handle the administrative burden that would otherwise consume your L&D team’s time, giving you accurate, up-to-date competency data without the friction of spreadsheets and email chains.

Automate scoring and track progress at scale

A capable LMS like Axis LMS scores knowledge tests and situational judgment questions instantly, applies your defined benchmarks automatically, and flags learners who fall below the passing threshold without requiring a manager to chase down results. That automation removes manual scoring errors from objectively scored items and ensures every learner is evaluated against the same standard, whether they’re taking the assessment on day one or completing a recertification three years later.

When your LMS connects assessment scores to individual learner records automatically, you turn a single data point into a longitudinal performance history that actually supports workforce decisions.

Tracking progress over time is where software earns its value most clearly. Your assessors can see at a glance whether a learner improved after a targeted intervention, which cohorts are clearing benchmarks consistently, and which competency areas show persistent gaps across a team or department. That visibility turns your competency data from a static snapshot into a tool your managers and L&D leads can act on in real time.

Connect assessment results to training paths

One of the most practical advantages of managing competency assessments inside an LMS is the ability to link a failed assessment directly to a remedial training path, so no one has to follow up manually for each learner. A learner who scores below standard on a compliance competency can be automatically enrolled in a targeted module, complete the additional training, and trigger a reassessment, all within the same system that captured the original score.

That closed-loop process keeps learners moving forward and ensures gaps don’t sit unaddressed because a manager forgot to follow up. It also gives compliance officers and auditors a clean, documented chain of evidence: the initial assessment date, the score, the corrective training completed, and the reassessment result, all tied to a specific learner and a specific role requirement. For organizations subject to regulatory standards, that traceable record is not a nice-to-have; it is the documentation that confirms your training program actually produces verified competency, not just completion rates.
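To see what that chain of evidence looks like as data, here is a minimal Python sketch of a per-learner audit trail. It is a generic illustration, not the Axis LMS API or data model. The class, method names, auto-enrollment rule, learner ID and dates are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class AuditTrail:
    learner: str
    requirement: str
    threshold: int  # percent score required for this role requirement
    events: list = field(default_factory=list)  # (event, date, score) tuples

    def record_assessment(self, score: int, on: date) -> None:
        self.events.append(("assessment", on, score))
        if score < self.threshold:
            # Hypothetical rule: a below-standard score auto-enrolls a remedial path.
            self.events.append(("enrolled_remedial", on, None))

    def record_training_completed(self, on: date) -> None:
        self.events.append(("training_completed", on, None))

    def is_closed(self) -> bool:
        """The loop is closed once the most recent assessment meets the threshold."""
        scores = [score for event, _, score in self.events if event == "assessment"]
        return bool(scores) and scores[-1] >= self.threshold


trail = AuditTrail("learner-02", "Data privacy handling", threshold=85)
trail.record_assessment(72, date(2024, 1, 10))
trail.record_training_completed(date(2024, 1, 20))
trail.record_assessment(90, date(2024, 1, 25))
for event, on, score in trail.events:
    print(on, event, score)
```

Each event carries a date, so the printed log reads as the assessment, enrollment, training and reassessment sequence an auditor would ask to see.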

Next steps

You now have a clear map of the 12 competency assessment methods covered in this guide, from knowledge tests and situational judgment tools to observation checklists and 360-degree feedback. The next move is to match that map to your specific roles and start with one or two methods where your current process has the most obvious gap. Trying to overhaul everything at once is where programs stall; picking a focused starting point keeps momentum going.

Once you have your method mix defined, the right tools make the difference between a program that scales and one that collapses under its own administrative weight. Axis LMS handles the scoring, tracking, and training path automation that manual processes simply can’t sustain as your organization grows. If you’re not sure where you stand with your current setup, take the LMS readiness quiz to find out which stage of the purchase process fits your situation and what your next practical step looks like.